Process Automation

A small marketing team replaced gut-feel content ops with a compact, ontology-based judgment layer. Topic discovery, drafting, humanizing, platform rewrites, card news, publishing: one connected flow.

Scattered heuristics → Judgment layer (audience, tone, forbidden phrasing, platform) → Per-channel outputs (Instagram, Threads, X)

The real question was never 'who writes it.' It was 'by what rules do we judge it.'

Ad platforms, CRM, analytics, AI writing assistants. The marketing stack was full. The team still kept getting stuck in the same place: content. Topics got picked on gut feel. Writing standards drifted between people. Every platform needed manual reformatting. Adding draft automation didn't fix it. It just shifted the work. Someone still had to rewrite the AI output line by line, and the automation that was supposed to save time quietly created a new review workload.

“AI could write a draft, but making it sound like us and reshaping it for each platform took even longer. We added automation and ended up with more review work. The real problem wasn't writing ability. Our team didn't have a shared set of rules for deciding what to publish and how.”

— Content Operations Lead

A small ontology, then draft, humanize, platform rewrite, card news, and publish in one line

We started by changing the question. Instead of asking whether we could automate content, we asked whether we could turn the judgment rules into a system. On top of the OTOworks engine, we built a small ontology covering what small-team content ops actually needs: category, audience, platform, forbidden phrasing, tone, format. Then we wired the entire flow on top of it, from draft to publish.

The first thing we did was break apart what had been running on gut feel. The left side shows where things used to be. Everyone had different standards, so corrections kept piling up: 'not on-brand enough,' 'too stiff,' 'cut the length.' By Draft v7 nobody was sure what the original even said anymore. The right side shows what changed. We took the judgment criteria apart (audience, tone, forbidden phrasing, format, platform, purpose) and locked them into the system. The AI started working within those rules from the very first draft. Instead of corrections stacking up, the first draft already fit.

Left: each person's sense of 'on-brand' stacking corrections. Right: judgment criteria locked in so the first draft already fits.

Those judgment criteria live in what we call the Ontology Decision Layer. Topic, audience, platform, purpose, tone, forbidden phrasing, all in one layer. On one side: topic discovery, context interpretation, and tone guardrails run in sequence. On the other side: Instagram carousels, Threads/X rewrites, and the publish queue. It's not a grand knowledge graph. It's a small concept system that a small team can start using right away. Once it was in place, the AI stopped behaving like a generic writing model. It started behaving more like a teammate working within a defined set of rules.
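To make the idea of a small concept system concrete, here is a minimal sketch of how such a layer could be represented. It is not the OTOworks engine's actual schema. The class names, the `check_brief` helper, and every example value are assumptions made up for illustration. Only the six kinds of criteria come from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ontology:
    """Hypothetical container for the shared judgment criteria."""
    categories: frozenset
    audiences: frozenset
    tones: frozenset
    formats: frozenset
    platforms: frozenset
    forbidden_phrases: tuple


@dataclass(frozen=True)
class Brief:
    """What a topic carries before it reaches the drafting model."""
    topic: str
    category: str
    audience: str
    tone: str
    format: str
    platform: str


def check_brief(brief, onto):
    """Return a list of problems; an empty list means the brief fits the ontology."""
    problems = []
    for field_name, allowed in (
        ("category", onto.categories),
        ("audience", onto.audiences),
        ("tone", onto.tones),
        ("format", onto.formats),
        ("platform", onto.platforms),
    ):
        value = getattr(brief, field_name)
        if value not in allowed:
            problems.append(f"{field_name}: {value!r} is not defined in the ontology")
    return problems


# Placeholder values, invented for this example.
ONTOLOGY = Ontology(
    categories=frozenset({"case-study", "how-to"}),
    audiences=frozenset({"small-team-marketers"}),
    tones=frozenset({"plain", "warm"}),
    formats=frozenset({"card-news", "short-post"}),
    platforms=frozenset({"instagram", "threads", "x"}),
    forbidden_phrases=("game-changing", "revolutionary"),
)
```

A brief that names an undefined tone or platform is rejected before any draft is generated, which is one way to read "working within those rules from the very first draft."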

A day in the life of the content marketer teammate, from topic input to multi-platform publish

Draft generation came next. By the time a topic reached the model, it already carried its audience, angle, and format. The output stopped being 'plausible but off-brand' and started coming back with context baked in. That's where the downstream rework began to shrink.

The hardest part was making the writing sound human. Overblown claims, filler phrases, that flat AI cadence. If any of it stays in, people rewrite the whole thing regardless of how fast the draft came out. So we pulled tone review and humanizing into their own step. The system checks against the forbidden phrasing, allowed tone, and brand vocabulary defined in the ontology, and keeps rewriting until the draft lines up. A good chunk of what people used to do by hand now finishes here.
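The check-and-rewrite loop described above can be sketched as follows. This is a simplified illustration, not the team's implementation. The phrase list is invented, `strip_phrases` is a stand-in for whatever model call performs the actual rewrite, and the `max_rounds` cap is an assumption added so the loop always ends.

```python
import re

# Hypothetical forbidden phrases; a real list would come from the ontology.
FORBIDDEN = ("game-changing", "revolutionary", "in today's fast-paced world")


def find_violations(text, forbidden):
    """Return every forbidden phrase that appears in the text (case-insensitive)."""
    lowered = text.lower()
    return [phrase for phrase in forbidden if phrase.lower() in lowered]


def strip_phrases(text, violations):
    """Stand-in rewriter: deletes the offending phrases and tidies whitespace."""
    for phrase in violations:
        text = re.sub(re.escape(phrase), "", text, flags=re.IGNORECASE)
    return re.sub(r"\s{2,}", " ", text).strip()


def review_until_clean(draft, forbidden, rewrite, max_rounds=3):
    """Rewrite until no forbidden phrase remains or the round budget runs out.

    Returns (final_text, passed).
    """
    for _ in range(max_rounds):
        violations = find_violations(draft, forbidden)
        if not violations:
            return draft, True
        draft = rewrite(draft, violations)
    return draft, not find_violations(draft, forbidden)
```

Returning a pass/fail flag alongside the text lets a draft that never converges surface for human review instead of looping forever.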

Rewriting the same piece for three platforms was always the dullest part of the job. It's not copy-paste. It's reshaping the format within the same set of rules. The original draft goes through rewrite rules (length adjustment, tone alignment, CTA repositioning) and comes out as versions for Instagram, Threads, and X. One topic pass, three channel versions. If a version blows past the platform limit, it loops back and rewrites itself.
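The loop-back behavior can be sketched like this, using the limits this write-up cites for each channel (Instagram 2,200 characters, Threads 500, X a weighted 280). Several parts are assumptions: `weighted_length` is only a rough approximation of X's weighted counting (it ignores URLs and emoji, and the code-point ranges are recalled from the public twitter-text configuration rather than verified here), and `drop_last_sentence` is a stand-in for the model-driven rewrite.

```python
# Code-point ranges assumed to count as 1; everything else counts as 2.
LIGHT_RANGES = ((0, 4351), (8192, 8205), (8208, 8223), (8242, 8247))


def weighted_length(text):
    """Approximate X-style weighted length (no URL or emoji handling)."""
    total = 0
    for ch in text:
        cp = ord(ch)
        total += 1 if any(lo <= cp <= hi for lo, hi in LIGHT_RANGES) else 2
    return total


# platform -> (limit, measuring function)
LIMITS = {
    "instagram": (2200, len),
    "threads": (500, len),
    "x": (280, weighted_length),
}


def drop_last_sentence(text, limit):
    """Stand-in shortener: remove the final sentence, or hard-cut a single one."""
    sentences = text.split(". ")
    if len(sentences) > 1:
        return ". ".join(sentences[:-1]) + "."
    return text[:limit]


def fit_to_platform(text, platform, shorten, max_rounds=10):
    """Shorten repeatedly until the text fits the platform limit."""
    limit, measure = LIMITS[platform]
    for _ in range(max_rounds):
        if measure(text) <= limit:
            return text
        text = shorten(text, limit)
    if measure(text) > limit:
        raise ValueError(f"could not fit text into the {platform} limit")
    return text
```

Keeping the measuring function next to each limit means the same loop serves all three channels, which matches the "same rules, different formats" framing.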

Same rules, different formats. Original draft → rewrite rules (length, tone, CTA) → per-channel outputs

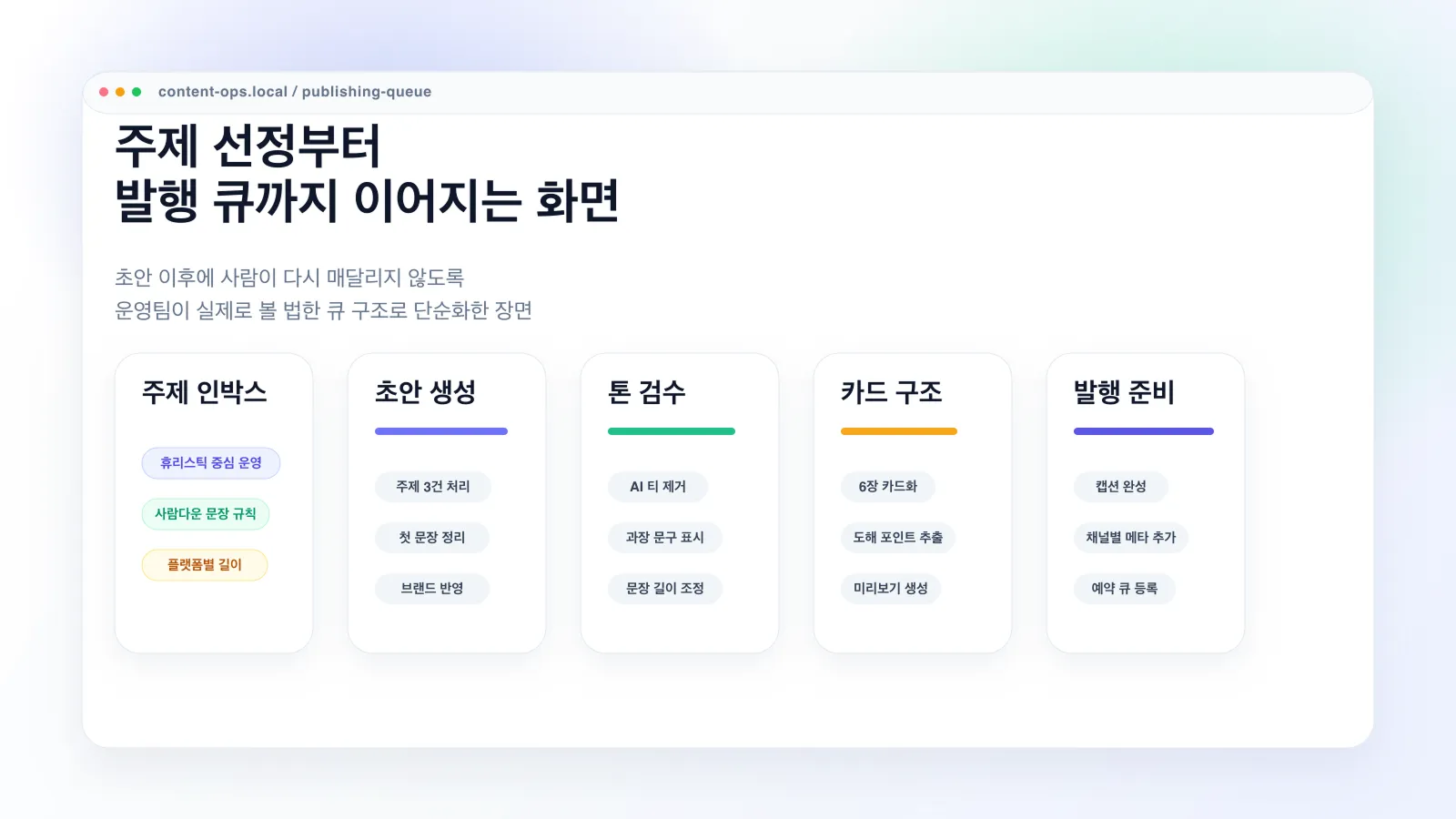

From the operator's side, it all came down to one question: can I see the whole day on one screen? Topics in the inbox, draft status, tone review pass/fail, card news check, publish queue. We simplified the operations view into a single pipeline so that no new manual work piles up after the draft stage. It stopped feeling like a tool that writes for you. It started feeling like handing over the ops flow itself.

Topic inbox → Draft → Tone review → Card structure → Publish ready. No new work piles up after the draft.
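One simple way to model that single pipeline view is an ordered set of stages plus a per-stage count. This is a sketch under assumptions: the stage names mirror the caption above, while the `advance` and `board` helpers are hypothetical and not part of the described system.

```python
from collections import Counter
from enum import IntEnum


class Stage(IntEnum):
    TOPIC_INBOX = 0
    DRAFT = 1
    TONE_REVIEW = 2
    CARD_STRUCTURE = 3
    PUBLISH_READY = 4


def advance(stage, passed=True):
    """Move an item one stage forward; stay put on a failed check or at the end."""
    if not passed or stage is Stage.PUBLISH_READY:
        return stage
    return Stage(stage + 1)


def board(items):
    """Summarize how many items sit in each stage, in pipeline order."""
    counts = Counter(stage.name for stage in items.values())
    return {stage.name: counts.get(stage.name, 0) for stage in Stage}
```

An item that fails tone review stays in that stage rather than spawning a separate manual task, which is the property the operations view is meant to make visible.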

Content doesn't end with text. You need card news slides, and each slide needs a supporting image. That's where the designer bottleneck used to be worst. Now, once a draft passes review, it splits into slides, each slide gets an auto-generated illustration, and the whole set renders as 1080x1350 card news images on an HTML template. 'Copy is done but waiting on images' stopped being a thing.
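A minimal sketch of the slide step, assuming one paragraph becomes one slide. The template markup, the function names, and the `image_url` parameter are all hypothetical; only the 1080x1350 size and the HTML-template approach come from the text. Illustration generation and rasterizing the HTML to an image file are out of scope here.

```python
import re
from html import escape
from string import Template

# Hypothetical slide template sized for 1080x1350 card news.
SLIDE_TEMPLATE = Template(
    '<section class="slide" style="width:${width}px;height:${height}px">\n'
    '  <img src="${image_url}" alt="">\n'
    "  <p>${body}</p>\n"
    "</section>"
)


def split_into_slides(draft):
    """Treat each blank-line-separated paragraph as one slide."""
    return [part.strip() for part in re.split(r"\n\s*\n", draft) if part.strip()]


def render_slide_html(body, image_url, width=1080, height=1350):
    """Fill the template, escaping the copy so it cannot break the markup."""
    return SLIDE_TEMPLATE.substitute(
        width=width,
        height=height,
        image_url=escape(image_url, quote=True),
        body=escape(body),
    )
```

Each rendered fragment would then go to whatever renderer turns HTML into an image, one slide at a time.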

Last step: publishing. Threads and Instagram run as one sequential flow. When a post has two or more images, the Instagram publish switches to a carousel automatically. If the platform rejects an image upload, there's a JPG conversion retry and a hosting fallback chain so one small failure doesn't stop the whole run. We also added a pre-run environment check, so troubleshooting moved from 'fix it after it breaks' to 'catch it before it runs.'
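The publishing safeguards can be sketched as three small helpers. Everything named here is an assumption for illustration: `UploadRejected`, the host callables, and `to_jpg` stand in for real platform and hosting clients, and the order of retries (original first, then JPG, then the next host) is one plausible reading of the fallback chain.

```python
import os


class UploadRejected(Exception):
    """Raised by a (hypothetical) host callable when it refuses an image."""


def missing_env(required, env=None):
    """Pre-run check: list the required settings that are absent or empty."""
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name)]


def choose_instagram_format(image_count):
    """Two or more images become a carousel; otherwise a single-image post."""
    return "carousel" if image_count >= 2 else "single"


def upload_with_fallbacks(image, hosts, to_jpg):
    """Try each host with the original image, then with a JPG conversion.

    `hosts` is an ordered list of callables that return a URL or raise
    UploadRejected. The first success wins; if all fail, the collected
    errors are raised together.
    """
    errors = []
    for host in hosts:
        for prepare in (lambda data: data, to_jpg):
            try:
                return host(prepare(image))
            except UploadRejected as exc:
                errors.append(str(exc))
    raise UploadRejected("; ".join(errors))
```

Running `missing_env` before the first upload is what moves a missing token from a mid-run failure to a pre-run message.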

Category, audience, format, forbidden phrasing, allowed tone, all organized into a minimal concept system. This is what makes the AI work like a teammate inside defined rules instead of a generic writing model.

Right after the draft, the system filters out overclaims, filler, and that flat AI rhythm. A good chunk of the manual rewriting that people used to do finishes here, and the downstream review cost drops.

One topic pass produces Instagram (2,200 chars), Threads (500 chars), and X (weighted 280) versions. If a version goes over the limit, it rewrites itself until the platform constraints and reading rhythm both fit.

Draft, slide split, image generation, template rendering, platform publish. One pipeline. A JPG format-retry and hosting fallback absorb upload failures so one hiccup doesn't stall everything.

Once the judgment rules were in the system, the bottleneck moved somewhere else.

The result isn't 'content got auto-generated.' It's closer to: the judgment layer for content ops became a system. With that in place, the team now spends more time picking what to publish than rewriting drafts.

Category tagging, audience context, and allowed tone all live in the ontology. No matter who runs content in a given week, the tone and output stay consistent. Handovers no longer throw things off.

Before, only drafting was automated and everything else came back to humans. Now tone review, platform rewrites, card news, and publishing are in the same flow. The rewrite step that used to be the biggest bottleneck isn't where work piles up anymore.

Manually rewriting the same piece for Instagram, Threads, and X is gone. The rewrites run automatically against each platform's limits and reading rhythm. People just review.

The most useful time for a content marketer isn't 'how do I phrase this?' It's 'what should we publish this week?' The system took over the execution part, and the team's time moved up a level.

“I figured it would be another content automation tool. It wasn't. The judgment rules were already in the system, so we stopped having to tear apart the AI output and rewrite everything. It honestly feels like we hired another content marketer. We spend more time now deciding what to publish than writing it.”

Content Operations Lead, Marketing Manager

If your team keeps saying "we tried AI but we still rewrite everything," the problem probably isn't writing. It's the judgment rules. Let's talk through it.