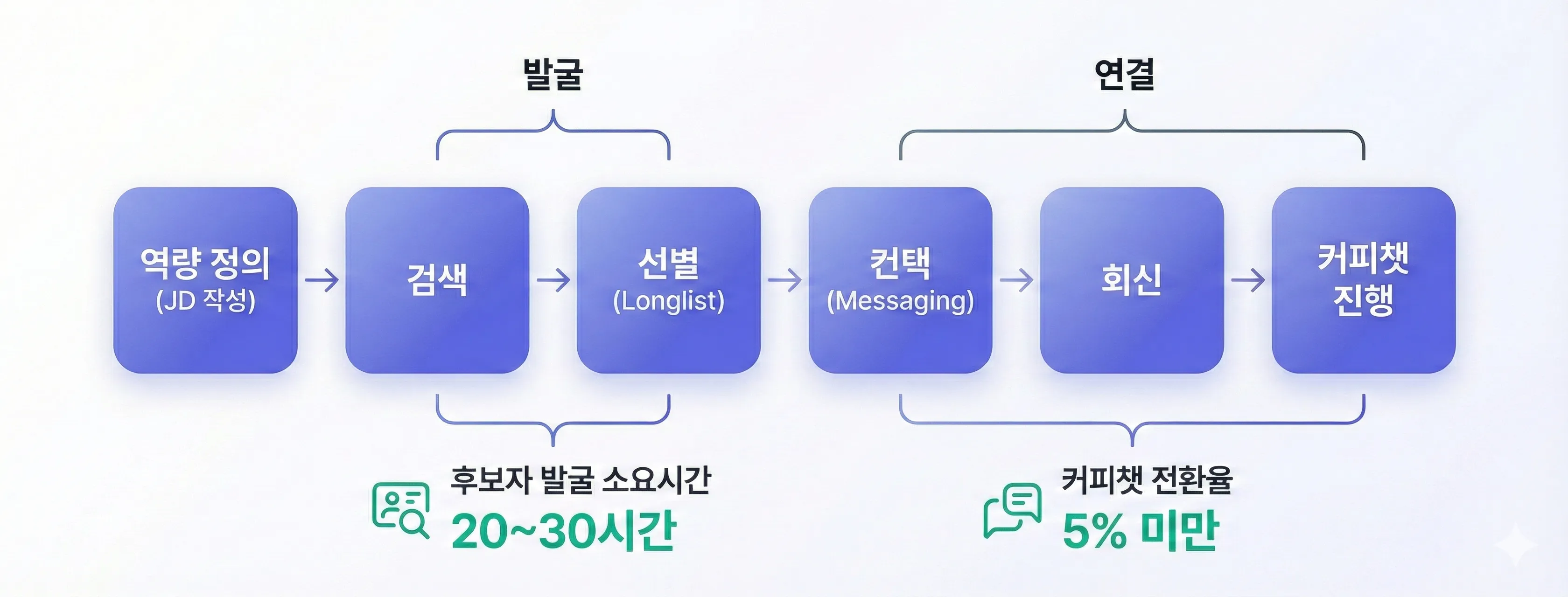

An AI headhunter case study: the full direct sourcing pipeline, automated with AI. Sourcing time dropped from 20 hours to 2 hours, list building is now over 90% automated, and the coffee chat conversion rate increased 5x.

The reality of direct sourcing with 1-2 recruiters

This client is an HR tech startup building AI-powered recruiting solutions. Their customers kept telling them the same story. They wanted to hire senior and lead-level talent, but their 1-2 recruiters were spending weeks searching LinkedIn, GitHub, and blogs to find candidates. Even after reaching out, hardly anyone replied.

“A TA lead told us: 'Even after spending 20 hours on a single position, we only get 2 coffee chats at most. Out of 200 profiles, fewer than 5 are worth reaching out to, and if even 1 out of those 5 replies, we consider ourselves lucky. This drags on and positions stay open for 3+ months.' After hearing that, we knew exactly what we needed to build.”

— HR Tech Startup CEO

Translating a hiring manager's gut feel into a system

What the client wanted wasn't a talent search engine. They wanted to capture the process where a hiring manager looks at a profile and decides 'this person could solve our problem.' From defining the role to sourcing, evaluation, messaging, and coffee chat conversion — it was a project to automate the entire sourcing pipeline.

Direct sourcing means companies proactively reach out to talent instead of posting job ads and waiting for applicants. Senior and lead-level talent rarely appears on the job market, so companies have no choice but to make the first move.

The problem is that the entire process is manual — LinkedIn searches, profile analysis, skill assessment, message writing, and scheduling. The image below shows the full direct sourcing pipeline automated in this project.

Complete pipeline from multi-platform data collection through AI evaluation to automated outreach

Before diving into the details, take a look at how this pipeline actually works in practice. This SaaS platform demo shows the entire flow — from position definition to candidate search, evaluation, and outreach — all handled through a chat interface in one place.

From B2B list delivery to a self-service SaaS sourcing platform

The first meeting wasn't at the client's office — it started beside the TA team of a company actually struggling with hiring. We watched a recruiter opening LinkedIn profiles one by one from morning, copying names and experience into a spreadsheet.

Then we asked a hiring manager, "What kind of person do you want?" and stories poured out that weren't in any JD. "Someone who's personally dealt with large-scale traffic at least once. Not someone who follows the manual, but someone who can make judgment calls during incidents." None of that context was in the JD. Closing that gap was the starting point of this project.

The first thing we built was a 'Role Definition Engine.' When a hiring manager inputs their expectations in natural language, the AI breaks it down into must-haves, nice-to-haves, and risks.

Enter "They need to solve this problem within 6 months of joining" and you get items like '3+ years distributed systems ops experience,' 'open-source contribution history,' 'risk: management-only background with no hands-on.' It also defines 'skill signals' to verify each item, down to what specific projects or metrics confirm 'large-scale traffic experience.'

It was the first time the hiring manager's mental model of 'a good hire' was actually written down.
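As a rough illustration of what that written-down model could look like, here is a minimal sketch of a structured role definition. The class names, field names, category assignments, and signal wording are assumptions made for illustration. The source only states that the engine outputs must-haves, nice-to-haves, risks, and skill signals.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Criterion:
    """One structured requirement plus the signals used to verify it."""
    text: str
    category: str  # "must_have", "nice_to_have", or "risk"
    skill_signals: List[str] = field(default_factory=list)


@dataclass
class RoleDefinition:
    expectation: str  # the hiring manager's original natural-language input
    criteria: List[Criterion] = field(default_factory=list)

    def by_category(self, category: str) -> List[Criterion]:
        """Return only the criteria in the given category."""
        return [c for c in self.criteria if c.category == category]


# Hand-written example mirroring the items quoted above. In the described
# system an LLM would produce this structure; the categories and signal
# texts chosen here are illustrative assumptions.
role = RoleDefinition(
    expectation="They need to solve this problem within 6 months of joining",
    criteria=[
        Criterion("3+ years distributed systems ops experience", "must_have",
                  ["operated production services under large-scale traffic"]),
        Criterion("open-source contribution history", "nice_to_have",
                  ["merged commits in public repositories"]),
        Criterion("management-only background with no hands-on", "risk"),
    ],
)

for criterion in role.by_category("must_have"):
    print(criterion.text, "->", criterion.skill_signals)
```

Keeping the signals next to each criterion means a later evaluation step can ask "which evidence confirms this item?" rather than matching on the criterion text alone.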

To get accurate criteria out of this engine, we couldn't rely on gut-feel prompting. We built a system that automatically generates 100+ prompt variations combining Chain-of-Thought, Few-shot, and Negative prompts, then has an in-house recruiting expert team qualitatively evaluate each result. We selected the highest-accuracy combination and built it into the system.
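The combination step can be sketched as a Cartesian product over prompt fragments. This is only an illustration: the fragment texts below are placeholders, the function names are hypothetical, and the scores stand in for the qualitative ratings that the recruiting experts assigned by hand.

```python
from itertools import product
from typing import List

# Placeholder fragments; the actual prompt wording is not given in the text.
REASONING = ["", "Think step by step before answering."]
FEW_SHOT = ["", "<worked example A>", "<worked example A>\n<worked example B>"]
NEGATIVE = ["", "Do not infer skills that have no evidence.",
            "Do not copy the job description verbatim."]


def build_variations(task: str) -> List[str]:
    """Combine every reasoning, few-shot, and negative fragment with the task."""
    variations = []
    for cot, shots, negative in product(REASONING, FEW_SHOT, NEGATIVE):
        # Skip empty fragments so each variation contains only active parts.
        parts = [p for p in (shots, task, cot, negative) if p]
        variations.append("\n\n".join(parts))
    return variations


def pick_best(variations: List[str], scores: List[float]) -> str:
    """Return the variation with the highest expert-assigned score."""
    best_index = max(range(len(variations)), key=lambda i: scores[i])
    return variations[best_index]


variations = build_variations("Split the expectation into must-haves, nice-to-haves, and risks.")
print(len(variations))  # 2 * 3 * 3 combinations
```

With more options per dimension, the same product grows past 100 variations quickly, which is why generating them programmatically is more practical than writing them by hand.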

Next was candidate discovery, and this is where we hit the biggest technical challenge. Good people aren't gathered in one place. LinkedIn profiles, GitHub commits, tech blogs, conference talks, open-source contributions — all scattered across different platforms.

Just collecting data wasn't enough. The key was 'competency metadata enrichment.'

We stitch scattered data fragments into a single candidate profile and layer capability signals on top. How much someone contributed to what scale of projects on GitHub, what depth of technical writing they do on their blog. Stitch these fragments together and capabilities invisible on a resume alone start to emerge.
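A minimal sketch of the stitching step follows. It assumes each fragment already carries a resolved `candidate_id`, which is the hard part in practice and is not shown here. The field names and the specific signals (commit counts, repository stars, post counts) are illustrative assumptions, not the client's actual schema.

```python
from collections import defaultdict
from typing import Dict, List


def stitch_profiles(fragments: List[dict]) -> Dict[str, dict]:
    """Group per-platform fragments by candidate and derive simple signals."""
    profiles: Dict[str, dict] = defaultdict(lambda: {"sources": [], "signals": {}})
    for frag in fragments:
        profile = profiles[frag["candidate_id"]]
        profile["sources"].append(frag["source"])
        signals = profile["signals"]
        if frag["source"] == "github":
            # Contribution volume and the scale of the largest project touched.
            signals["commits"] = signals.get("commits", 0) + frag["commits"]
            signals["max_repo_stars"] = max(signals.get("max_repo_stars", 0),
                                            frag["repo_stars"])
        elif frag["source"] == "blog":
            signals["technical_posts"] = signals.get("technical_posts", 0) + 1
    return dict(profiles)


fragments = [
    {"candidate_id": "c1", "source": "github", "commits": 120, "repo_stars": 4000},
    {"candidate_id": "c1", "source": "blog", "title": "Tracing at scale"},
    {"candidate_id": "c1", "source": "github", "commits": 30, "repo_stars": 50},
    {"candidate_id": "c2", "source": "blog", "title": "Notes on caching"},
]
profiles = stitch_profiles(fragments)
print(profiles["c1"]["signals"])
```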

Full flow of the direct sourcing automation pipeline

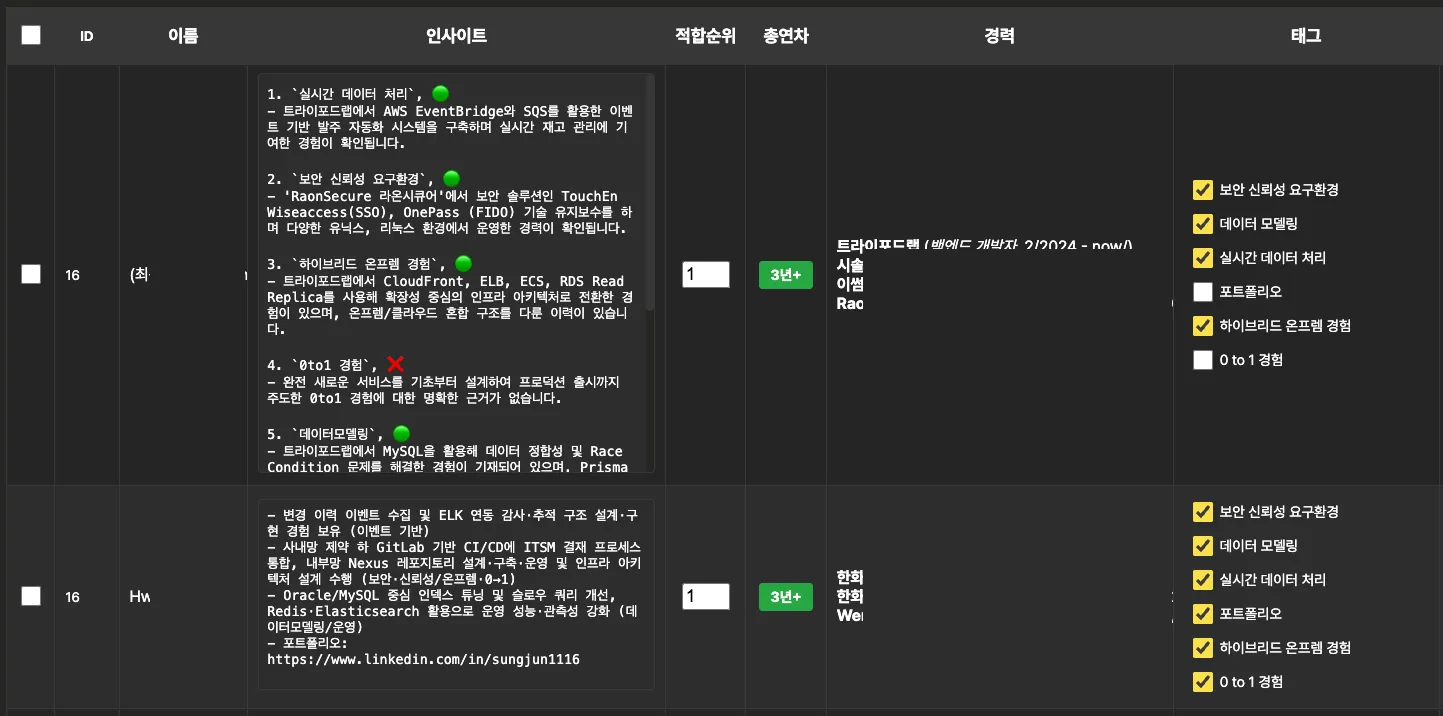

Once the data was collected, the real challenge began. For the LLM-based inference system to evaluate candidates, the AI needed to precisely understand 'what this person did, when, and where.'

But LLMs have a weak sense of time. A candidate whose tenure ran from 2020 to 2022 would be evaluated against press releases from 2018 to 2020, and this pattern kept repeating. The same weakness has also been reported in academic research.

To solve it, we had to rework the RAG (Retrieval-Augmented Generation) pipeline itself. We attached time-series metadata — employment start and end dates, time buckets — to the embeddings, and applied hard filters at retrieval to return only chunks overlapping with the requested employment period. With this in place, cases of the model citing irrelevant historical data as evidence completely disappeared.
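The hard filter itself comes down to an interval-overlap check. The sketch below applies it to an in-memory list for clarity. In a real pipeline the same condition would more likely be expressed as a metadata filter in the vector store, and the `Chunk` type and function names here are assumptions.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class Chunk:
    text: str
    start: date  # first day the chunk's content refers to
    end: date    # last day the chunk's content refers to


def overlaps(chunk: Chunk, period_start: date, period_end: date) -> bool:
    """True when the chunk's date range intersects the requested period."""
    return chunk.start <= period_end and chunk.end >= period_start


def filter_by_period(chunks: List[Chunk], period_start: date,
                     period_end: date) -> List[Chunk]:
    """Drop every chunk that falls entirely outside the employment period."""
    return [c for c in chunks if overlaps(c, period_start, period_end)]


chunks = [
    Chunk("Press release: product launch", date(2018, 1, 1), date(2019, 12, 31)),
    Chunk("Press release: traffic milestone", date(2021, 3, 1), date(2021, 3, 31)),
]
# Candidate's tenure: 2020 through 2022.
relevant = filter_by_period(chunks, date(2020, 1, 1), date(2022, 12, 31))
print([c.text for c in relevant])
```

Because the filter runs before ranking, an out-of-period chunk cannot reach the model no matter how semantically similar it is to the query.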

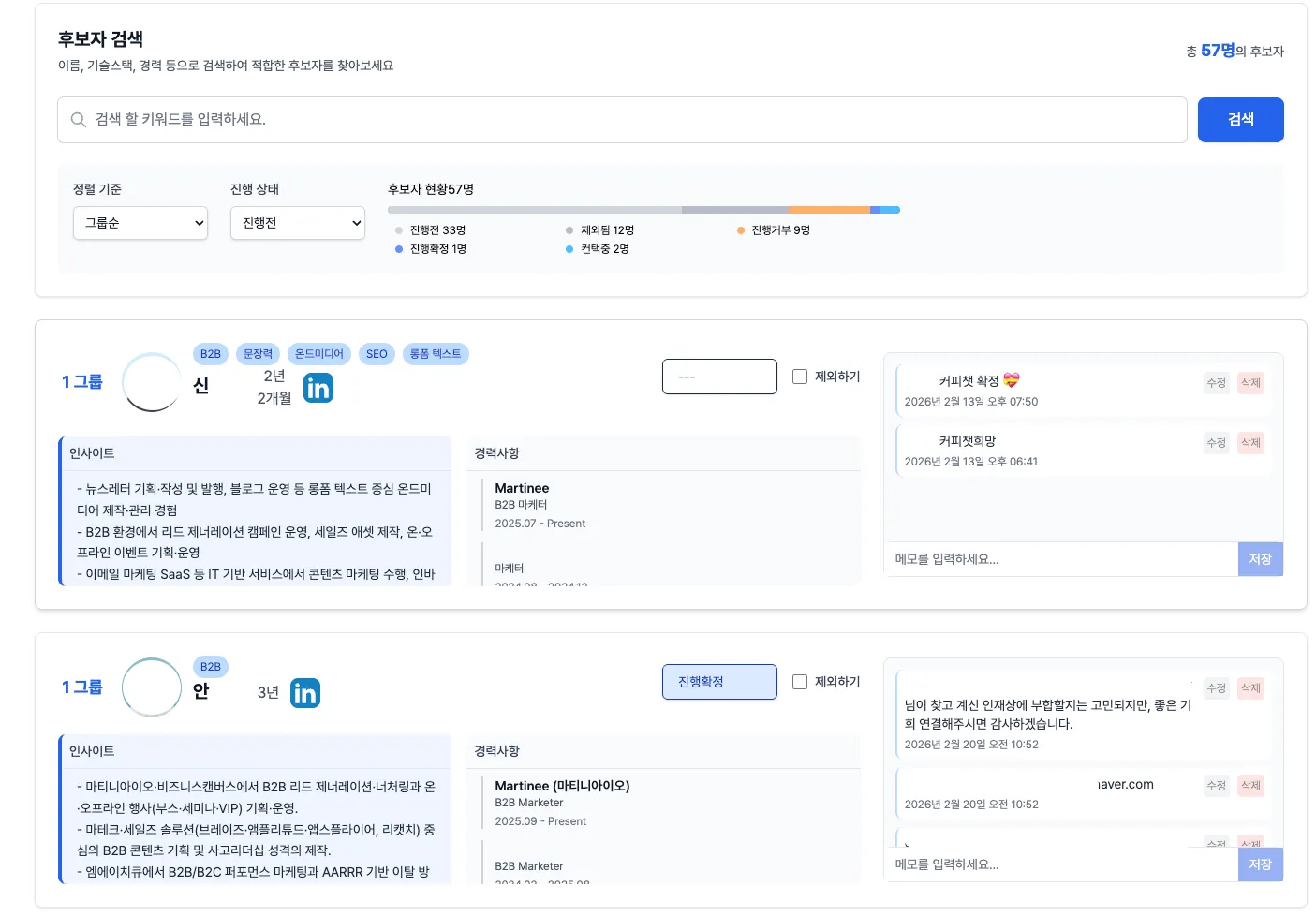

Candidate shortlist dashboard generated by LLM + RAG-based inference system

After solving the time-series problem, we hit context window limits. As a candidate's competency tags grew, the evidence context for each tag increased non-linearly. Exceeding the context limits of commercial models became frequent.

We solved it three ways. First, we decomposed queries by tag and ran parallel retrievals. Second, for data with long, repetitive content like news articles, we used an LLM to compress down to key sentences and keywords before embedding. Third, we placed the query at the end of the prompt to mitigate the 'Lost in the Middle' phenomenon.
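The first and third measures can be sketched together: one retrieval per tag run in parallel, then a prompt that puts the evidence first and the question last. The `retrieve` callable is a hypothetical stand-in for whatever retrieval backend is used, and the LLM compression step is omitted.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def retrieve_per_tag(tags: List[str],
                     retrieve: Callable[[str], List[str]],
                     max_workers: int = 4) -> Dict[str, List[str]]:
    """Run one retrieval per competency tag in parallel, keeping tag order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(retrieve, tags))
    return dict(zip(tags, results))


def build_prompt(evidence: Dict[str, List[str]], query: str) -> str:
    """Place evidence sections first and the query at the very end."""
    sections = [f"[{tag}]\n" + "\n".join(chunks) for tag, chunks in evidence.items()]
    return "\n\n".join(sections) + "\n\nQuestion: " + query


def fake_retrieve(tag: str) -> List[str]:
    # Stand-in for a real vector search; returns a canned chunk per tag.
    return [f"evidence for {tag}"]


evidence = retrieve_per_tag(["kubernetes", "tracing"], fake_retrieve)
prompt = build_prompt(evidence, "Does this candidate have distributed systems experience?")
print(prompt)
```

Splitting by tag keeps each retrieval small and independent, so the evidence for one tag can be trimmed or compressed without affecting the others.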

On top of that, we introduced a multi-model ensemble — one model reasons, another cross-validates. This filters out hallucinations and biases from any single model, producing evaluation results that hiring managers can genuinely understand and trust.
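One way to picture the cross-validation step: the first model proposes claims with supporting evidence, and a claim survives only if the second model confirms it. Both models are replaced by simple stubs below so the example runs without any API. The `Claim` type and the substring check are assumptions for illustration, not the actual verification logic.

```python
from typing import Callable, List, NamedTuple


class Claim(NamedTuple):
    statement: str
    evidence: str  # quote the reasoning model says supports the statement


def cross_validate(profile_text: str,
                   reasoner: Callable[[str], List[Claim]],
                   verifier: Callable[[Claim, str], bool]) -> List[Claim]:
    """Keep only the reasoner's claims that the verifier independently accepts."""
    return [claim for claim in reasoner(profile_text) if verifier(claim, profile_text)]


def stub_reasoner(profile_text: str) -> List[Claim]:
    # Stand-in for the reasoning model; the second claim is a hallucination.
    return [
        Claim("Has Kubernetes experience", "Kubernetes-based microservices"),
        Claim("Led a 50-person team", "managed 50 engineers"),
    ]


def stub_verifier(claim: Claim, profile_text: str) -> bool:
    # Stand-in for the second model: accept only evidence found in the source.
    return claim.evidence.lower() in profile_text.lower()


profile = "Key committer on a Kubernetes-based microservices project for 2 years."
verified = cross_validate(profile, stub_reasoner, stub_verifier)
print([c.statement for c in verified])
```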

Multi-model ensemble cross-validation architecture

Collecting candidates is an engineering problem, but evaluating them is different. We needed to capture that intuition where a hiring manager looks at a profile and says 'not this person' within 10 seconds. Simple keyword matching doesn't work. '5 years of Python' on a profile doesn't make someone a good backend engineer.

What we built is a 'job performance prediction engine.' For each rubric item, it maps evidence collected from a candidate's public data and generates both a score and a summary.

'Does this candidate have distributed systems experience?' → 'Key committer on a Kubernetes-based microservices project for 2 years, 3 blog posts on handling large-scale traffic.' Evaluation with evidence attached.

Every decision was designed to record 'why.' Why this candidate made the short-list, why that one was excluded. When you tell a hiring manager 'it's automated,' skepticism is the default. We had to show them the actual basis for every call.
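A sketch of how a score, its evidence, and the final reason could be recorded together is shown below. The evidence-matching step is passed in as a callable because the described engine uses LLM-based inference for it. The stub matcher, the scoring rule (evidence count capped at 3), and the threshold are all assumptions made to keep the example runnable.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class RubricResult:
    item: str
    score: int           # 0 when no evidence was found
    evidence: List[str]


def evaluate(rubric: List[str], pool: List[str],
             find_evidence: Callable[[str, List[str]], List[str]]) -> List[RubricResult]:
    """Attach matching evidence and a capped score to every rubric item."""
    results = []
    for item in rubric:
        matched = find_evidence(item, pool)
        results.append(RubricResult(item, min(len(matched), 3), matched))
    return results


def explain(results: List[RubricResult], threshold: int) -> Dict[str, object]:
    """Record the decision together with the per-item reasons behind it."""
    total = sum(r.score for r in results)
    reasons = [f"{r.item}: {'; '.join(r.evidence) if r.evidence else 'no evidence found'}"
               for r in results]
    decision = "shortlisted" if total >= threshold else "excluded"
    return {"decision": decision, "total": total, "reasons": reasons}


def stub_find_evidence(item: str, pool: List[str]) -> List[str]:
    # Placeholder for the LLM-based mapping; a lookup table keeps this runnable.
    keyword = {"distributed systems experience": "kubernetes",
               "open-source contribution": "maintainer"}[item]
    return [e for e in pool if keyword in e.lower()]


pool = ["Key committer on a Kubernetes-based microservices project for 2 years",
        "3 blog posts on handling large-scale traffic"]
results = evaluate(["distributed systems experience", "open-source contribution"],
                   pool, stub_find_evidence)
record = explain(results, threshold=1)
print(record["decision"], record["reasons"])
```

Storing the reasons alongside the decision is what lets the system answer "why was this candidate excluded?" later without re-running the evaluation.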

AI evaluation report showing 'why this person' with evidence for each candidate

Even with a short-list, it's useless if candidates won't meet you. Template message reply rates are usually below 5%. That's 95% of effort going nowhere.

So we built a hyper-personalized message engine. Based on a candidate's public activity data, it automatically generates messages with 'why you' (mentioning this candidate's unique strengths) and 'why now' (the context of why this team is opening this position).

"I was impressed by your recent blog post on distributed tracing. Our team is dealing with exactly that problem right now." Messages at that level for 30 candidates, done in 20-30 minutes.

After a reply, the auto-conversion pipeline kicks in. It suggests available time slots and automatically shares a 1-page candidate summary with the hiring manager before the coffee chat. Candidates get the impression that 'this team is well-prepared,' and hiring managers can understand why this candidate is a good fit within 10 minutes.

Auto-generated hyper-personalized messages based on candidates' public activity

After automating search and filtering, the client's next expansion area was 'actual connection with candidates.' It started as a B2B model — we built the shortlist and delivered it. But as demand grew, the client began transitioning to a SaaS tool that lets companies run their own sourcing. The structure allows everything from position definition to candidate search, evaluation, and outreach in one place, through an interface as intuitive as a chat UI. It's proven technology with a usable product layer on top.

Extraction accuracy optimized through 100+ prompt combination experiments. Natural language input from hiring managers is auto-structured into Must-have, Nice-to-have, and Risk categories.

Scattered activity data across platforms is stitched together and enriched with capability signals. Capabilities invisible on a resume are revealed.

Employment start and end date metadata is attached to embeddings, with time-based filters enforced at retrieval. This completely prevents the LLM from referencing data from the wrong period.

One model reasons on competencies while another cross-validates. This filters out hallucinations and biases from any single model, producing results that hold up when a human actually reviews them.

Summarizes 'why this person can solve our problem' for each candidate. Projects, contributions, and writings found from public data serve as evidence.

Every decision — why this candidate was included, why that one was excluded — comes with a reason. Ask 'why?' and you get an answer on the spot.

Messages with 'why you' and 'why now' are auto-generated based on each candidate's public activity. 30 candidates' messages done in 20-30 minutes.

After a reply, the pipeline handles scheduling suggestions, auto-shared candidate briefs, and feedback collection. This shortens the gap between interest and an actual meeting.

Instead of searching LinkedIn, they spend time discussing why a candidate is the right fit

After the system was up and running, feedback started coming back from companies actually using the platform. "The TA lead doesn't open LinkedIn first thing in the morning anymore. Instead, they review the short-list the AI built and spend their time discussing with the hiring manager 'why this candidate's experience matters for us.'" It wasn't just about saving time. What the TA team actually does day-to-day changed.

Days used to start with LinkedIn searches and spreadsheet organizing. Now they start by reviewing the AI-built short-list and discussing with hiring managers 'what about this candidate fits our team.' They went from being searchers to being evaluators.

Before, asking a hiring manager to 'review these profiles' was stressful. When would they review dozens of profiles? Now the AI presents a short-list of 5-10 candidates with evidence, so hiring managers can give meaningful feedback in 10 minutes. It was the starting point for hiring becoming a team effort, not just HR's job.

Template message reply rates were below 5%, but with hyper-personalized messages, they noticeably increased. Candidates started replying saying 'You actually read my blog post?' Outreach went from spam to a meaningful proposal.

It started as a B2B model where we built the shortlists and delivered them. As the system stabilized, the client transitioned to a SaaS tool customers can run themselves. Everything from position definition to outreach is handled in one place through an interface as intuitive as a chat UI. It's proven technology with a usable product layer on top.

Post-coffee-chat feedback — why we proceeded, why we didn't — accumulates in the system, automatically calibrating evaluation criteria and messaging strategy for the next cycle. Short-list quality at 6 months is noticeably better than at 3 months.

“'AI evaluating people? Really?' That was the first reaction. Hiring felt like something that fundamentally needed a human eye. But OTOworks sat with our client's TA team, dissected their actual workflow, and what we thought was gut feel turned out to be patterns. The thing that clinched it — every recommendation comes with a clear 'why this person.' Not 'here are 30 profiles, take a look when you can.' It lays out why this person's specific experience maps to your problem. That's when hiring managers actually started engaging.”

HR Tech Startup CEO

Process Automation

90% reduction in internal inquiry time with 3-second average responses. In-house AI chatbot handling government regulations with full security tracking.

PC Automation

30 minutes to 1 minute, 50+ clicks to just 3. Monthly EBS exam paper downloads fully automated for an academy instructor, from login to organized folders.

Document Automation

12 hours of document sorting cut to 7 minutes with 98% accuracy. AI-powered classification freed an evaluation consulting firm to focus on actual assessments.

Do you think "Our work requires human judgment, so it can't be automated"? This client thought the same at first. Let's talk — solutions become clear when we discuss together.